Artificial intelligence (AI) has grown unprecedentedly over the last decade, transforming industries from healthcare to retail. But behind every successful AI model lies a robust foundation: data engineering. Rapid advancements in AI would not have been possible without the pivotal role of data engineering, which ensures that data is collected, processed, and delivered to robust intelligent systems.

The saying “garbage in, garbage out” has never been more relevant. AI models are only as good as the data that feeds them, making data engineering for AI a critical component of modern machine learning pipelines.

Why Data Engineering Is the Driving Force of AI

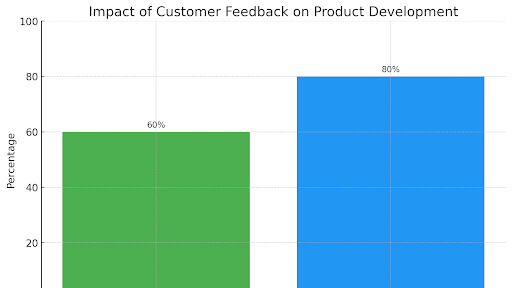

Did you know that 80% of a data scientist’s time is spent preparing data rather than building models? Forbes’s statistics underscore the critical importance of data engineering in AI workflows. Without well-structured, clean, and accessible data, even the most advanced AI algorithms can fail.

In the following sections, we’ll explore each component more profoundly and explore how data engineering for AI is evolving to meet future demands.

Overview: The Building Blocks of Data Engineering for AI

Understanding the fundamental elements that comprise contemporary AI data pipelines is crucial to comprehending the development of data engineering in AI:

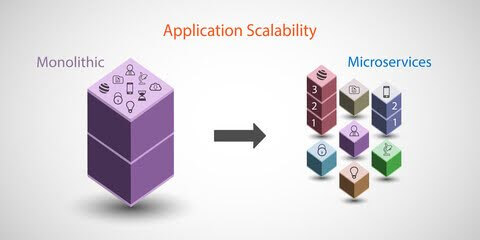

- ETL (Extract, Transform, Load) is the widely understood convention of extracting data from different sources, converting it into a system table, and then transferring it to a data warehouse. This method prioritizes data quality and structure before making it accessible for analysis or AI models.

- ELT (Extract, Load, Transform): As cloud-based data lakes and modern storage solutions gained prominence, ELT emerged as an alternative to ETL. With ELT, data is first extracted and loaded into a data lake or warehouse, where transformations occur after it is stored. This approach allows for real-time processing and scalability, making it ideal for handling large datasets in AI workflows.

Why These Components Matter

- ETL permits accurate and formatted data information necessary for a perfect AI forecast.

- ELT caters to the increasing requirements of immediate data processing and managing big data.

The Rise of Feature Stores in AI

Visualize the source for all the features utilized in the machine learning models you have developed. On the other hand, the Hanaa feature storage store is a unique system that stores, provides, and guarantees that features are always up to date.

Benefits of Feature Stores

- Streamlined Feature Engineering:

- No more reinventing the wheel! Feature stores allow data scientists to reuse and share features easily across different projects.

- Able to decrease significantly the amount of time and energy dedicated to feature engineering.

- Improved Data Quality and Consistency:

- Feature stores maintain a single source of features and, therefore, guarantee all the models in a modern ML organization access the correct features.

- However, it is beneficial to both models since they achieve better accuracy and higher reproducibility of the outcomes.

- Accelerated Model Development:

- Thanks to this capability, data scientists can more easily extract and modify various elements of such data to create better models.

- Thanks to this capability, data scientists can more easily extract and modify various elements of such data to create better models.

- Improved Collaboration:

- Feature stores facilitate collaboration between data scientists, engineers, and business analysts.

- Feature stores facilitate collaboration between data scientists, engineers, and business analysts.

- Enhanced Model Explainability:

- Feature stories can help improve model explainability and interpretability by tracking feature lineage. Since feature stores can track feature lineage, the two concepts can improve model explanations and interpretations.

Integrating ETL/ELT Processes with Feature Stores

ETL/ELT pipelines are databases that store, process, and serve data and features for Machine Learning. They ensure that AI models get good, clean data to train and predict. ETL/ELT pipelines should also be linked with feature stores to ensure a smooth, efficient, centralized data-to-model pipeline.

Workflow Integration

That means you should visualize an ideal pipeline in which the data is neither stuck, manipulated, or lost but directly fed to your machine-learning models. This is where ETL/ELT processes are combined with feature stores active.

- ETL/ELT as the Foundation: ETL or ELT processes are the backbone of your data pipeline. They extract data from various sources (databases, APIs, etc.), transform it into a usable format, and load it into a data lake or warehouse.

- Feeding the Feature Store: It flows into the feature store once data is loaded. The data is further processed, transformed, and enriched to create valuable features for your machine-learning models.

- On-demand Feature Delivery: The feature store then provides these features to your model training and serving systems to ensure they stay in sync and are delivered efficiently. Learn the kind of data engineering that would glide straightforwardly from origin to your machine learning models. This is where ETL/ELT and feature stores come into the picture.

Best Practices for Integration

- Data Quality Checks: To ensure data accuracy and completeness, rigorous data quality checks should be implemented at every ETL/ELT process stage.

- Data Lineage Tracking: Track the origin and transformations of each feature to improve data traceability and understandability.

- Version Control for Data Pipelines: Use tools like Debt (a data build tool) to control data transformations and ensure reproducibility.

- Continuous Monitoring: Continuously monitor data quality and identify any data anomalies or inconsistencies.

- Scalability and Performance: Optimize your ETL/ELT processes for performance and scalability to handle large volumes of data engineering.

Case Studies: Real-World Implementations of ETL/ELT Processes and Feature Stores in Data Engineering for AI

In the modern context of the global data engineering hype, data engineering for AI is vital to drive organizations to assess how data can be processed, stored, and delivered to support the following levels of machine learning and AI uses.

Businesses are leading cutting-edge work in AI by incorporating ETL/ELT processes into strategic coupling with feature stores. Further, we discuss examples of successful implementation and what it led to in the sections below.

1. Uber: Powering Real-Time Predictions with Feature Stores

Uber developed its Michelangelo Feature Store to streamline its machine learning workflows. The feature store integrates with ELT pipelines to extract and load data from real-time sources like GPS sensors, ride requests, and user app interactions. The data is then transformed and stored as features for models predicting ride ETAs, pricing, and driver assignments.

Outcomes

- Reduced Latency: The feature store enabled the serving of features in real-time, reducing the latencies with AI predictions by a quarter.

- Increased Model Reusability: Feature reuse in data engineering pipelines allowed for the development of multiple models, improving development efficiency by up to 30%.

- Improved Accuracy: The models with real-time features fared better due to higher accuracy and thus enhanced performance regarding rider convenience and efficient ride allocation.

Learnings

- Real-time ELT processes integrated with feature stores are crucial for applications requiring low-latency predictions.

- Centralized feature stores eliminate redundancy, enabling teams to collaborate more effectively.

2. Netflix: Enhancing Recommendations with Scalable Data Pipelines

ELT pipelines are also used at Netflix to handle numerous records, such as watching history/queries and ratings from the user. The processed data go through the feature store, and the machine learning models give the user recommendation content.

Outcomes

- Improved User Retention: Personalized recommendations contributed to Netflix’s 93% customer retention rate.

- Scalable Infrastructure: ELT pipelines efficiently handle billions of daily data points, ensuring scalability as user data grows.

- Enhanced User Experience: Feature stores improved recommendations’ accuracy, increasing customer satisfaction and retention rates.

Learnings

- The ELT pipeline is a contemporary computational feature of data warehouses, making it ideal for organizations that create and manage large datasets.

- From these, feature stores maintain high and consistent feature quality in the training and inference phases, helping improve the recommendation models.

3. Airbnb: Optimizing Pricing Models with Feature Stores

Airbnb integrated ELT pipelines with a feature store to optimize its dynamic pricing models. Data from customer searches, property listings, booking patterns, and seasonal trends was extracted, loaded into a data lake, and transformed into features for real-time pricing algorithms.

Outcomes

- Dynamic Pricing Efficiency: Models could adjust prices in real time, increasing bookings by 20%.

- Time Savings: Data engineering reduced model development time by 40% by reusing curated features.

- Scalability: ELT pipelines enabled Airbnb to process data engineering across millions of properties globally without performance bottlenecks.

Learnings

- Reusable features reduce duplication of effort, accelerating the deployment of new AI models.

- Integrating the various ELT processes with feature stores by AI applications promotes the global scaling of AI implementation processes and dynamic characteristics.

4. Spotify: Personalizing Playlists with Centralized Features

Spotify utilizes ELT pipelines to consolidate users’ data from millions of touchpoints daily, such as listening, skips, and searches. This data is transformed and stored in a feature store to power its machine-learning models for personalized playlists like “Discover Weekly.”

Outcomes

- Higher Engagement: Personalized playlists increased user engagement, with Spotify achieving a 70% user retention rate.

- Reduced Time to Market: Centralized feature stores allowed rapid experimentation and deployment of new recommendation models.

- Scalable AI Workflows: ELT scalable pipelines processed terabytes of data daily, ensuring real-time personalization for millions of users.

Learnings

- Centralized feature stores simplify feature management, improving the efficiency of machine learning workflows.

- ELT pipelines are essential for processing high-volume user interaction data engineering at scale.

5. Walmart: Optimizing Inventory with Data Engineering for AI

Walmart employs ETL pipelines and feature stores to optimize inventory management using predictive analytics. Data from sales transactions, supplier shipments, and seasonal trends is extracted, transformed into actionable features, and loaded into a feature store for AI models.

Outcomes

- Reduced Stockouts: This caused improved inventory availability and stockout levels, which were reduced by 30% with the help of an established predictive model.

- Cost Savings: We overcame many issues related to inventory processes and reduced operating expenses by 20%.

- Improved Customer Satisfaction: The system’s real-time information, supported by AI, helped Walmart satisfy customers’ needs.

Learnings

- ETL pipelines are ideal for applications requiring complex transformations before loading into a feature store.

- Data engineering for AI enables actionable insights that drive both cost savings and customer satisfaction.

Conclusion

Data engineering is the cornerstone of AI implementation in organizations and still represents a central area of progress for machine learning today. Technologies such as modern feature stores, real-time ELT, and AI in data management will revolutionize the data operations process.

The combination of ETL/ELT with feature stores proved very effective in increasing scalability, offering real-time opportunities, and increasing model performance across industries.

This is because current processes are heading towards a more standardized, cloud-oriented outlook with increased reliance on automation tools to manage the growing data engineering challenge.

Feature stories will emerge as strategic knowledge repositories that store and deploy features. To the same extent, ETL and ELT business practices must transform in response to real-time and significant data concerns.

Consequently, organizations must evaluate the state of data engineering and adopt new efficiencies that drive data pipelines to adapt to the constantly changing environment and remain relevant effectively.

They must also insist on the quality of outcomes and empower agility in AI endeavors. Current investment in scalable data engineering will enable organizations to future-proof and leverage AI for competitive advantage tomorrow.

FAQs

1. What is the difference between ETL and ELT in data engineering for AI?

ETL (Extract, Transform, Load) transforms data before loading it into storage. In contrast, ELT (Extract, Load, Transform) loads raw data into storage and then transforms it, leveraging modern cloud-based data warehouses for scalability.

2. How do feature stores improve AI model performance?

Feature stores centralize and standardize the storage, retrieval, and serving of features for machine learning models. They ensure consistency between training and inference while reducing duplication of effort.

3. Why are ETL and ELT critical for AI workflows?

ETL and ELT are essential for cleaning, transforming, and organizing raw data into a usable format for AI models. They streamline data pipelines, reduce errors, and ensure high-quality inputs for training and inference.

4. Can feature stores handle real-time data for AI applications?

Modern feature stores like Feast and Tecton are designed to handle real-time data, enabling low-latency AI predictions for applications like fraud detection and recommendation systems.

How can [x]cube LABS Help?

[x]cube has been AI native from the beginning, and we’ve been working with various versions of AI tech for over a decade. For example, we’ve been working with Bert and GPT’s developer interface even before the public release of ChatGPT.

One of our initiatives has significantly improved the OCR scan rate for a complex extraction project. We’ve also been using Gen AI for projects ranging from object recognition to prediction improvement and chat-based interfaces.

Generative AI Services from [x]cube LABS:

- Neural Search: Revolutionize your search experience with AI-powered neural search models. These models use deep neural networks and transformers to understand and anticipate user queries, providing precise, context-aware results. Say goodbye to irrelevant results and hello to efficient, intuitive searching.

- Fine-Tuned Domain LLMs: Tailor language models to your specific industry for high-quality text generation, from product descriptions to marketing copy and technical documentation. Our models are also fine-tuned for NLP tasks like sentiment analysis, entity recognition, and language understanding.

- Creative Design: Generate unique logos, graphics, and visual designs with our generative AI services based on specific inputs and preferences.

- Data Augmentation: Enhance your machine learning training data with synthetic samples that closely mirror accurate data, improving model performance and generalization.

- Natural Language Processing (NLP) Services: Handle sentiment analysis, language translation, text summarization, and question-answering systems with our AI-powered NLP services.

- Tutor Frameworks: Launch personalized courses with our plug-and-play Tutor Frameworks. These frameworks track progress and tailor educational content to each learner’s journey, making them perfect for organizational learning and development initiatives.

Interested in transforming your business with generative AI? Talk to our experts over a FREE consultation today!

1-800-805-5783

1-800-805-5783