The concept of digital twins stands at the forefront of revolutionizing product development. Digital twins serve as virtual replicas of physical objects, bridging the gap between the tangible and the digital.

They represent a powerful convergence of data, analytics, and simulation, offering unprecedented insights and opportunities for optimization. As businesses strive to stay ahead in a competitive landscape, digital twins have emerged as indispensable assets, driving innovation and efficiency across various industries.

This blog explores the transformative role of digital twins in modern product development, dissecting their definition, significance, and practical applications. From understanding the core concept of digital twins to unraveling their profound impact on optimizing design processes and enhancing product performance, this exploration aims to showcase their pivotal role in shaping the future of innovation.

What are Digital Twins?

By definition, digital twins are virtual replicas of physical objects, processes, or systems, created and maintained using real-time data and simulation algorithms. They are kept synchronized with their physical counterparts, allowing for continuous monitoring, analysis, and optimization.

A. Evolution and history of digital twins:

The concept of digital twins has evolved from its origins in manufacturing and industrial automation. Initially introduced by Dr. Michael Grieves at the University of Michigan in 2003, digital twins have since matured into a widely adopted technology across various industries such as aerospace, automotive, healthcare, and more.

B. Key components and characteristics of digital twins:

Digital twins comprise several vital components and characteristics, including:

- Data integration: Real-time data from sensors, IoT devices, and other sources are integrated to represent the physical object or system accurately.

- Simulation and modeling: Advanced simulation and modeling techniques replicate the physical counterpart’s behavior, performance, and interactions.

- Analytics and insights: Data analytics algorithms analyze the synchronized data to provide actionable insights for decision-making and optimization.

- Continuous synchronization: Digital twins are continuously updated and synchronized with their physical counterparts to ensure real-time accuracy and relevance.
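To make the four components above more concrete, here is a minimal Python sketch. The `PumpTwin` class, its field names, the 80 °C limit, and the linear heating model are all assumptions made for illustration; they do not come from any particular digital twin platform.

```python
from dataclasses import dataclass, field


@dataclass
class PumpTwin:
    """Hypothetical digital twin of a pump; names and thresholds are illustrative."""

    max_temp_c: float = 80.0  # assumed safe operating limit
    state: dict = field(default_factory=dict)
    last_update: float = 0.0

    def ingest(self, reading: dict) -> bool:
        """Data integration and synchronization: merge a newer sensor reading."""
        if reading["timestamp"] <= self.last_update:
            return False  # stale or duplicate reading, keep the current state
        self.state.update(reading["values"])
        self.last_update = reading["timestamp"]
        return True

    def simulate_temp(self, minutes: float, heating_rate_c_per_min: float) -> float:
        """Simulation: project temperature with a deliberately simple linear model."""
        return self.state["temp_c"] + heating_rate_c_per_min * minutes

    def insights(self) -> list:
        """Analytics: turn the synchronized state into actionable flags."""
        alerts = []
        if self.state.get("temp_c", 0.0) > self.max_temp_c:
            alerts.append("temperature above limit")
        return alerts


twin = PumpTwin()
twin.ingest({"timestamp": 1.0, "values": {"temp_c": 72.0, "rpm": 1450}})
twin.ingest({"timestamp": 0.5, "values": {"temp_c": 99.0}})  # older, so ignored
projected = twin.simulate_temp(minutes=10, heating_rate_c_per_min=1.0)
```

A real twin would replace the linear model with a validated physics or data-driven model, but the division of responsibilities (ingest, simulate, analyze, stay in sync) would likely look similar.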

C. Digital twins examples in various industries:

Digital twins are being utilized across diverse sectors for a wide range of applications, including:

- Manufacturing: Digital twins of production lines and equipment enable predictive maintenance, process optimization, and quality control.

- Healthcare: Patient-specific digital twins support personalized treatment planning, medical device design, and virtual surgery simulations.

- Smart cities: Digital twins of urban infrastructure facilitate efficient city planning, traffic management, and disaster response.

- Aerospace: Digital twins of aircraft components and systems support predictive maintenance, performance optimization, and fuel efficiency enhancements.

In summary, digital twins represent a transformative technology that enables organizations to gain deeper insights, improve decision-making, and optimize performance across various domains. This ultimately drives innovation and efficiency in product development and beyond.

Bridging the Physical and Digital Worlds

A. Explanation of how digital twins bridge the gap between physical objects and their virtual counterparts

Digital twins serve as a transformative bridge, seamlessly connecting physical objects with their virtual counterparts in the digital realm. At the core of this synergy lies the concept of replication and synchronization.

A digital twin is a virtual representation of a physical entity, meticulously crafted to mirror its real-world counterpart in structure, behavior, and functionality. Through this digital replica, stakeholders gain unprecedented insights and control over physical assets, unlocking many opportunities for innovation and optimization.

B. Importance of real-time data synchronization

Real-time data synchronization plays a pivotal role in ensuring the fidelity of digital twins. By continuously feeding data from IoT sensors embedded within physical objects, digital twins remain dynamically updated, reflecting their physical counterparts’ latest changes and conditions.

This constant flow of information enables stakeholders to monitor, analyze, and respond to real-world events proactively and in an informed way, maximizing efficiency and minimizing downtime.

C. Role of IoT sensors and data analytics in maintaining digital twins

IoT sensors and data analytics are the backbone of digital twins, empowering them to thrive in the digital ecosystem. These sensors act as the eyes and ears of digital twins, capturing a wealth of data about the physical environment, performance metrics, and operational parameters.

Leveraging advanced analytics techniques, this data is processed, contextualized, and transformed into actionable insights, driving informed decision-making and facilitating predictive maintenance strategies.
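As a rough illustration of how raw sensor data might become an actionable insight, the sketch below flags readings that deviate sharply from a rolling window of recent values. The function name `detect_anomalies`, the window size, and the z-score threshold are assumptions chosen for the example, not a prescribed method.

```python
from collections import deque
from statistics import mean, stdev


def detect_anomalies(readings, window=5, threshold=3.0):
    """Return indices of readings far from the mean of the preceding window."""
    recent = deque(maxlen=window)
    anomalies = []
    for i, value in enumerate(readings):
        if len(recent) == window:
            mu, sigma = mean(recent), stdev(recent)
            # Flag the reading if it lies more than `threshold` standard
            # deviations away from the recent average.
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                anomalies.append(i)
        recent.append(value)
    return anomalies


vibration_mm_s = [10.0, 10.1, 9.9, 10.0, 10.2, 10.1, 25.0, 10.0]
flagged = detect_anomalies(vibration_mm_s)
```

Note that in this simple version a flagged reading still enters the window and inflates the spread for the next few samples; a production pipeline would probably exclude or down-weight it.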

D. Benefits of having a digital twin for physical objects

The benefits of embracing digital twins for physical objects are manifold. By providing a digital replica that mirrors the intricacies of its physical counterpart, digital twins offer stakeholders a virtual sandbox for experimentation and optimization.

Through simulations and predictive modeling, designers and engineers can iteratively refine product designs, fine-tune performance parameters, and anticipate potential issues before they manifest in the physical realm.

Furthermore, digital twins empower stakeholders with enhanced visibility, control, and agility, enabling them to adapt and respond swiftly to changing market demands and operational challenges.
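The "virtual sandbox" idea can be sketched as a small parameter sweep. The example below assumes a cantilever bracket with a rectangular cross-section and uses the standard tip-deflection formula δ = F·L³ / (3·E·I), with I = b·h³ / 12. The material values are approximate textbook figures, and the function names are hypothetical.

```python
from itertools import product

# Approximate textbook values: (Young's modulus in Pa, density in kg/m^3).
MATERIALS = {
    "aluminum": (69e9, 2700.0),
    "steel": (200e9, 7850.0),
}


def tip_deflection(force_n, length_m, width_m, height_m, youngs_modulus_pa):
    """Tip deflection of an end-loaded cantilever with a rectangular section."""
    inertia = width_m * height_m**3 / 12
    return force_n * length_m**3 / (3 * youngs_modulus_pa * inertia)


def lightest_design(force_n, length_m, width_m, heights_m, max_deflection_m):
    """Try every material/height combination; return the lightest that is stiff enough."""
    best = None
    for (name, (modulus, density)), height in product(MATERIALS.items(), heights_m):
        if tip_deflection(force_n, length_m, width_m, height, modulus) > max_deflection_m:
            continue  # too flexible, discard this candidate
        mass_kg = density * length_m * width_m * height
        if best is None or mass_kg < best[2]:
            best = (name, height, mass_kg)
    return best  # (material, height_m, mass_kg) or None if nothing qualifies


best = lightest_design(
    force_n=100.0, length_m=1.0, width_m=0.05,
    heights_m=[0.01, 0.02, 0.03], max_deflection_m=0.01,
)
```

Every candidate here is evaluated virtually, so no physical prototype is needed to rule out the designs that would bend too much.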

Digital Twins in Product Development

A. Application of Digital Twins in Product Design and Prototyping:

Digital twins revolutionize product design and prototyping by providing real-time insights and simulations. Through the virtual representation of physical objects, designers can experiment with different configurations, materials, and scenarios, optimizing designs before physical prototypes are even produced.

This iterative approach reduces the risk of errors and saves time and resources, which in turn leaves more room for creativity and innovation during the design phase.

B. Utilization of Digital Twins for Predictive Maintenance and Performance Optimization:

One of the hallmark advantages of digital twins is their ability to facilitate predictive maintenance and performance optimization. By continuously monitoring and analyzing data from the physical counterpart, digital twin software can predict potential issues, schedule maintenance proactively, and optimize performance parameters in real time.

This proactive strategy lowers business costs by cutting downtime, extending the life of assets, and improving overall operational efficiency.
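One simple way to picture predictive maintenance is trend extrapolation: fit a straight line to a degradation indicator and estimate when it will cross a service threshold. The sketch below assumes a roughly linear trend; the function name, the vibration figures, and the threshold are illustrative, and real systems typically use richer models.

```python
def estimate_hours_to_threshold(hours, values, threshold):
    """Least-squares line through (hours, values); return hours left until threshold."""
    n = len(hours)
    mean_t = sum(hours) / n
    mean_v = sum(values) / n
    sxx = sum((t - mean_t) ** 2 for t in hours)
    sxy = sum((t - mean_t) * (v - mean_v) for t, v in zip(hours, values))
    slope = sxy / sxx
    if slope <= 0:
        return None  # indicator is flat or improving, no crossing predicted
    intercept = mean_v - slope * mean_t
    crossing_hour = (threshold - intercept) / slope
    # Remaining time is measured from the most recent observation.
    return max(0.0, crossing_hour - hours[-1])


operating_hours = [0, 100, 200, 300]
vibration_mm_s = [2.0, 2.5, 3.0, 3.5]  # hypothetical sensor history
remaining = estimate_hours_to_threshold(operating_hours, vibration_mm_s, threshold=5.0)
```

With an estimate like this, maintenance can be scheduled before the threshold is reached rather than after a failure.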

C. Enhancing Collaboration Between Design Teams and Stakeholders Through Digital Twins:

Digital twins provide a common platform for collaboration, enabling seamless communication and alignment between design teams and stakeholders. With access to a shared virtual model, stakeholders can provide feedback, review designs, and make informed decisions collaboratively.

Improved collaboration leads to better product outcomes by streamlining the decision-making process, minimizing misunderstandings, and guaranteeing that all parties work toward the same goal.

D. Case Studies Showcasing Successful Implementation of Digital Twins in Product Development:

Digital twins, virtual replicas of physical assets, are revolutionizing product engineering. They empower businesses to optimize design, predict issues, and accelerate innovation by simulating real-world performance and behavior. Let’s explore compelling case studies showcasing the successful implementation of digital twins:

1. Rolls-Royce and the Trent XWB Engine:

Challenge: Develop a new jet engine, the Trent XWB, for the Airbus A350 XWB aircraft, ensuring optimal performance and fuel efficiency.

Solution: Rolls-Royce created a high-fidelity digital twin of the engine, incorporating data from various sources, such as sensor readings, design models, and historical performance data.

Impact:

- Reduced development time by 50%: The digital twin enabled virtual testing of countless scenarios, optimizing design decisions and identifying potential issues early.

- Improved engine performance: The digital twin facilitated the creation of an engine with superior fuel efficiency and lower emissions.

- Enhanced maintenance: The digital twin predicts maintenance needs and optimizes service schedules, reducing downtime and costs.

2. GE Aviation and the LEAP Engine:

Challenge: Design and manufacture the LEAP engine, a new fuel-efficient engine for single-aisle aircraft, within a tight timeframe and budget.

Solution: GE Aviation leveraged a digital twin throughout the development process, simulating various operating conditions and analyzing performance data.

Impact:

- Reduced development costs by 20%: The digital twin facilitated efficient design iterations and eliminated the need for extensive physical prototyping.

- Shorter time to market: The virtual testing and optimization enabled faster development and timely engine delivery.

- Improved engine reliability: The digital twin helped identify and address potential reliability issues before production, leading to a more robust engine design.

3. BMW and the iNext Electric Vehicle:

Challenge: Develop the iNext electric vehicle model with advanced features such as autonomous driving capabilities.

Solution: BMW employed a digital twin of the iNext throughout the development process, integrating data from simulations, real-world testing, and user feedback.

Impact:

- Enhanced safety and functionality: The digital twin facilitated the virtual testing of various autonomous driving scenarios, ensuring safety and refining functionality.

- Optimized vehicle performance: The digital twin enabled simulations to optimize battery range, power management, and overall vehicle performance.

- Faster development and testing: Virtual testing allowed for quicker iterations and efficient integration of user feedback, accelerating development cycles.

These case studies demonstrate the transformative potential of digital twins in product development. By enabling virtual testing, optimizing design, and predicting potential issues, digital twins empower businesses to:

- Reduce development costs and time to market

- Improve product performance and reliability

- Gain a competitive edge through innovation

As the technology matures and adoption grows, digital twins are poised to become an indispensable tool for businesses to navigate the ever-evolving landscape of product development.

Challenges and Future Trends

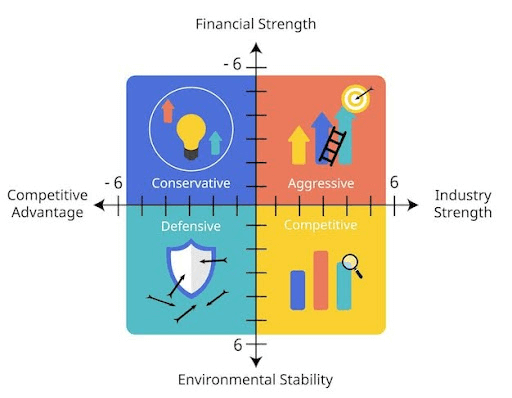

A. Common Challenges Faced in Implementing Digital Twins:

- Data Integration: Integrating data from various sources into a cohesive digital twin environment can be complex, requiring compatibility and standardization.

- Security Concerns: Ensuring the security and privacy of sensitive data within digital twin systems presents a significant challenge, particularly with the interconnected nature of IoT devices.

- Scalability: Scaling digital twin systems to accommodate large-scale deployments and diverse use cases while maintaining performance and efficiency can be daunting.

- Interoperability: Achieving seamless interoperability between different digital twin platforms and technologies is essential for maximizing their potential across industries.

- Skill Gap: The shortage of skilled professionals capable of designing, implementing, and managing digital twin ecosystems poses a considerable challenge for organizations.

B. Emerging Trends and Advancements in Digital Twin Technology:

- Edge Computing: Leveraging edge computing capabilities to process data closer to the source enables real-time insights and reduces latency, enhancing the effectiveness of digital twins.

- AI and Machine Learning: Integrating artificial intelligence (AI) and machine learning algorithms empowers digital twins to analyze vast amounts of data, predict outcomes, and optimize performance autonomously.

- Blockchain Integration: Incorporating blockchain technology enhances the security, transparency, and integrity of data exchanged within digital twin ecosystems, mitigating risks associated with data tampering.

- 5G Connectivity: The advent of 5G networks facilitates faster data transmission and lower latency, enabling more responsive and immersive experiences within digital twin environments.

- Digital Twin Marketplaces: Developing digital twin marketplaces and ecosystems fosters collaboration, innovation, and the exchange of digital twin models and services across industries.

C. Potential Impact of Digital Twins on Future Product Development Strategies:

- Agile Development: Digital twins enable iterative and agile product development processes by providing real-time feedback, simulation capabilities, and predictive insights, reducing time-to-market and enhancing product quality.

- Personalized Products: Leveraging digital twins to create customized product experiences tailored to individual preferences and requirements fosters customer engagement, loyalty, and satisfaction.

- Sustainable Innovation: By simulating the environmental impact of products and processes, digital twins empower organizations to adopt sustainable practices, minimize waste, and optimize resource utilization.

- Predictive Maintenance: Proactive maintenance enabled by digital twins helps organizations anticipate and prevent equipment failures, minimize downtime, and extend the lifespan of assets, resulting in cost savings and operational efficiency.

- Collaborative Design: Digital twins facilitate collaborative design and co-creation efforts among cross-functional teams, stakeholders, and partners, fostering innovation, creativity, and knowledge sharing throughout the product development lifecycle.

Conclusion

As businesses navigate the complexities of modern product development, adopting digital twins emerges as a game-changing strategy for innovation and efficiency. Embracing digital twins unlocks a world of possibilities, enabling organizations to streamline design processes, optimize performance, and drive unparalleled innovation.

By leveraging the power of digital twins, businesses can gain invaluable insights into their products’ behavior, anticipate maintenance needs, and iterate rapidly to meet evolving market demands.

Take advantage of the opportunity to revolutionize your product development strategy. Explore digital twin adoption today and propel your organization towards enhanced innovation, efficiency, and success in the digital age.

How can [x]cube LABS Help?

[x]cube LABS’s teams of product owners and experts have worked with global brands such as Panini, Mann+Hummel, tradeMONSTER, and others to deliver over 950 successful digital products, resulting in the creation of new digital revenue lines and entirely new businesses. With over 30 global product design and development awards, [x]cube LABS has established itself among global enterprises’ top digital transformation partners.

Why work with [x]cube LABS?

- Founder-led engineering teams:

Our co-founders and tech architects are deeply involved in projects and are unafraid to get their hands dirty.

- Deep technical leadership:

Our tech leaders have spent decades solving complex technical problems. Having them on your project is like instantly plugging into thousands of person-hours of real-life experience.

- Stringent induction and training:

We are obsessed with crafting top-quality products. We hire only the best hands-on talent and train them like Navy SEALs to meet our standards of software craftsmanship.

- Next-gen processes and tools:

Eye on the puck. We constantly research and stay up-to-speed with the best technology has to offer.

- DevOps excellence:

Our CI/CD tools enforce strict quality checks so that the code in your project is top-notch.

Contact us to discuss your digital innovation plans, and our experts will be happy to schedule a free consultation.

1-800-805-5783
