1-800-805-5783

For the better part of the last decade, our interaction with artificial intelligence has been confined behind screens.

We have marveled at Large Language Models that can draft essays, generate code, and synthesize vast amounts of data in seconds.

However, as we navigate through 2026, a new and more tangible frontier has emerged that moves intelligence out of the digital cloud and into the physical environment. This paradigm shift is known as physical AI.

If generative AI is the brain, then physical AI is the body that allows that brain to interact with, move through, and manipulate the physical world.

It represents the intersection of advanced machine learning, robotics, and sensor technology. While digital AI thrives in the world of bits and bytes, this new evolution is designed to master the world of atoms.

Understanding the nuances of this technology is essential for grasping the next wave of industrial and consumer innovation.

To understand what makes this technology unique, we must look at how it differs from the software-centric models we have used previously. Physical AI operates through a continuous feedback loop that involves three critical stages: sensing, reasoning, and actuation.

A digital AI receives its input via text or uploaded files. In contrast, physical AI perceives the world through a vast array of sensors, including LiDAR, high-resolution cameras, haptic sensors, and ultrasonic arrays.

In 2026, these systems use sensor fusion to create a real-time, three-dimensional understanding of their surroundings.

This is not just about seeing an object; it is about estimating properties such as its weight, texture, and structural integrity before ever making contact.
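As a rough illustration of what sensor fusion means in practice, the sketch below combines two distance estimates by inverse-variance weighting, so the less noisy sensor counts for more. The `Reading` class, the `fuse` function, and the noise figures are assumptions made for this example; production systems typically rely on more elaborate filters.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    distance_m: float  # measured distance to the object, in meters
    variance: float    # assumed sensor noise variance (smaller = more trusted)


def fuse(readings):
    """Inverse-variance weighted average of independent distance estimates."""
    weights = [1.0 / r.variance for r in readings]
    total = sum(weights)
    fused_distance = sum(w * r.distance_m for w, r in zip(weights, readings)) / total
    # The fused variance is never larger than the best single sensor's variance.
    return Reading(fused_distance, 1.0 / total)


# Hypothetical readings: a precise LiDAR and a noisier ultrasonic sensor.
lidar = Reading(distance_m=2.00, variance=0.01)
ultrasonic = Reading(distance_m=2.20, variance=0.04)
fused = fuse([lidar, ultrasonic])
print(round(fused.distance_m, 3), round(fused.variance, 4))
```

The fused estimate lands closer to the LiDAR reading because its assumed noise is lower, and the combined variance is smaller than that of either sensor alone.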

The “intelligence” in these systems is grounded in what researchers call World Models. Unlike a language model that predicts the next word in a sentence, a world model predicts the physical consequences of an action.

If a robot pushes a glass of water, the physical AI must predict whether the glass will slide, tip over, or shatter based on the surface friction and the force applied.

This predictive reasoning allows the system to navigate complex, unpredictable environments without needing a pre-programmed map for every scenario.
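To make the glass example concrete, here is a deliberately simple, hand-written stand-in for a world model. It is not a learned model: it applies textbook rigid-body rules (sliding starts when the push exceeds friction, tipping starts when the push torque exceeds the restoring torque of the object's weight). The function name, parameters, and numbers are assumptions for illustration only.

```python
G = 9.81  # gravitational acceleration in m/s^2


def predict_push(force_n, mass_kg, mu, base_width_m, push_height_m):
    """Predict whether a pushed object stays put, slides, or tips.

    Treats the object as a rigid block pushed horizontally at push_height_m
    above the surface. Shattering is out of scope for this toy model.
    """
    weight = mass_kg * G
    slide_threshold = mu * weight                             # friction limit
    tip_threshold = weight * (base_width_m / 2) / push_height_m  # torque balance
    if force_n <= min(slide_threshold, tip_threshold):
        return "stays"
    # Whichever threshold is lower is the failure mode that occurs first.
    return "slides" if slide_threshold < tip_threshold else "tips"


# Hypothetical glass: 0.3 kg, 6 cm base, friction coefficient 0.5.
high_push = predict_push(1.0, 0.3, 0.5, 0.06, 0.10)  # pushed near the rim
low_push = predict_push(2.0, 0.3, 0.5, 0.06, 0.02)   # pushed near the base
gentle = predict_push(0.5, 0.3, 0.5, 0.06, 0.10)     # a light touch
print(high_push, low_push, gentle)  # tips slides stays
```

A learned world model would infer these outcomes from data rather than from explicit formulas, but the question it answers is the same: given this action, what happens next?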

Actuation is where the intelligence becomes manifest. It involves the motors, hydraulics, and mechanical joints that allow the AI to move.

The breakthrough in 2026 has been the development of “End-to-End” learning, where the AI learns to control its limbs directly from its sensory input.

This removes the need for rigid, hand-coded instructions, allowing for fluid, human-like movements that can adapt to a slippery floor or a delicate object in real time.
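The sensing, reasoning, and actuation stages described above can be sketched as a small closed loop. In this minimal one-dimensional example, the hand-written proportional rule in `reason` is only a placeholder for what an end-to-end system would learn from data; the function names, the gain, and the step limit are all assumptions.

```python
def sense(state):
    """Read the current position (a real system would fuse noisy sensors)."""
    return state["position"]


def reason(measured, target, gain=0.5):
    """Decide a movement command proportional to the remaining error."""
    return gain * (target - measured)


def act(state, command, max_step=0.2):
    """Apply the command, clipped to what the actuator can do in one tick."""
    step = max(-max_step, min(max_step, command))
    state["position"] += step


def run_loop(target, steps=50, tolerance=1e-3):
    state = {"position": 0.0}
    for _ in range(steps):
        measured = sense(state)
        if abs(target - measured) < tolerance:
            break  # close enough to the target
        act(state, reason(measured, target))
    return state["position"]


final_position = run_loop(target=1.0)
print(final_position)
```

Because the loop re-senses on every tick, a disturbance between ticks is corrected on the next pass, which is the property that lets a physical system adapt in real time.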

While the concepts behind robotics have existed for years, several technological convergences have made 2026 the definitive year for the rise of physical AI.

First, the massive scale-up in computing power has allowed Large Behavior Models (LBMs) to be trained on millions of hours of video and robotic trial-and-error data.

Second, the “Sim-to-Real” gap (the difficulty of transferring a model trained in simulation to the messy real world) has narrowed substantially.

We now have high-fidelity simulations that accurately mimic gravity, friction, and fluid dynamics, allowing physical AI to undergo years of training in just a few weeks of digital time.
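The arithmetic behind "years of training in a few weeks" is straightforward once simulations run in parallel. The sketch below uses made-up figures (the number of parallel environments and the speed-up factor are assumptions, not measurements) to show how wall-clock time converts into simulated experience.

```python
def simulated_years(wall_clock_days, parallel_envs, speedup=1.0):
    """Total simulated experience, in years.

    wall_clock_days: real time spent running the simulator
    parallel_envs:   number of simulated robots running at once (hypothetical)
    speedup:         how much faster than real time each simulation runs
    """
    return wall_clock_days * parallel_envs * speedup / 365.0


# Hypothetical setup: three weeks of compute, 100 environments at real-time speed.
experience = simulated_years(wall_clock_days=21, parallel_envs=100)
print(f"{experience:.2f} simulated years")
```

Under these assumed numbers, three weeks of compute yields a little under six years of simulated practice; more environments or a faster-than-real-time simulator scale that figure linearly.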

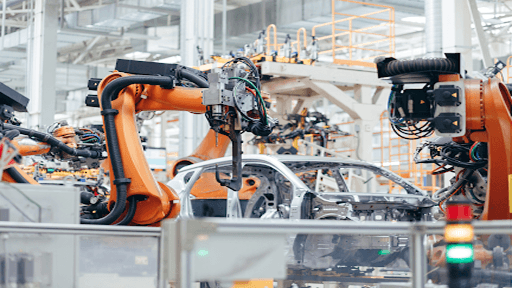

We are seeing a move away from “specialized” industrial robots that can only do one thing, such as a robotic arm on a car assembly line.

Today, the focus is on general-purpose humanoid robots powered by physical AI. These machines are designed to operate in spaces built for humans, using human tools and navigating human obstacles.

Whether it is restocking shelves in a retail environment or assisting in elder care, these generalists represent the most advanced application of physical intelligence to date.

| Feature | Digital AI (Generative) | Physical AI (Agentic) |
| --- | --- | --- |
| Primary Environment | Servers and digital interfaces | The physical, 3D world |
| Input Type | Text, code, and images | Multi-sensory (LiDAR, Haptics, Vision) |
| Core Goal | Information processing and content | Physical task execution and movement |
| Feedback Loop | User prompts and responses | Sensor-motor interactions with the environment |
| Key Challenge | Hallucinations and factual accuracy | Safety, latency, and physical constraints |

The implementation of physical AI is transforming sectors where human labor was previously the only option for complex, non-repetitive tasks.

In the massive distribution centers of 2026, physical AI has replaced static conveyor belts with fleets of autonomous mobile robots.

These agents do not just follow lines on a floor; they navigate dynamic environments, avoiding human workers and optimizing their own paths in real time.
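One simple way to picture this kind of re-routing is a grid search that is re-run whenever a cell becomes occupied. The sketch below uses breadth-first search on a tiny warehouse grid; the grid layout, the `shortest_path` helper, and the idea of treating a human worker as a blocked cell are simplifying assumptions, and real fleets use far richer planners.

```python
from collections import deque


def shortest_path(grid_size, start, goal, blocked):
    """Breadth-first search on a 4-connected grid; returns a list of cells or None."""
    rows, cols = grid_size
    queue = deque([start])
    came_from = {start: None}
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:  # walk back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            in_bounds = 0 <= nr < rows and 0 <= nc < cols
            if in_bounds and nxt not in blocked and nxt not in came_from:
                came_from[nxt] = cell
                queue.append(nxt)
    return None  # no route available


# Initial plan on an empty 3x3 floor.
plan = shortest_path((3, 3), (0, 0), (0, 2), blocked=set())
# A worker steps into cell (0, 1), so the robot replans around them.
detour = shortest_path((3, 3), (0, 0), (0, 2), blocked={(0, 1)})
print(plan)
print(detour)
```

The replanned route is longer but avoids the occupied cell, which is the essence of navigating a dynamic environment rather than following a fixed line.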

In manufacturing, robots powered by this intelligence can now handle soft or irregular materials—such as fabrics or food items—with a level of dexterity previously impossible.

In medicine, the role of physical AI is becoming a cornerstone of the modern operating room. Surgical robots are no longer just tools controlled by a doctor; they act as co-pilots with their own “tactile intelligence.”

They can compensate for a surgeon’s slight hand tremors or autonomously perform repetitive tasks like suturing with sub-millimeter precision, which can improve patient outcomes and shorten recovery times.
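Tremor compensation can be pictured as filtering: fast, small oscillations are damped while slow, deliberate motion passes through. The sketch below uses a basic exponential moving average as the low-pass filter. The 10 Hz tremor, the 1 kHz sample rate, and the smoothing factor are assumptions for illustration, and this is not a description of how any particular surgical system works.

```python
import math


def smooth(samples, alpha=0.02):
    """Exponential moving average: a simple first-order low-pass filter."""
    filtered = []
    estimate = samples[0]
    for x in samples:
        estimate = alpha * x + (1 - alpha) * estimate
        filtered.append(estimate)
    return filtered


SAMPLE_RATE_HZ = 1000  # assumed sensor sample rate
TREMOR_HZ = 10.0       # assumed tremor frequency

# One second of pure tremor with unit amplitude.
tremor = [
    math.sin(2 * math.pi * TREMOR_HZ * n / SAMPLE_RATE_HZ)
    for n in range(SAMPLE_RATE_HZ)
]
filtered = smooth(tremor)

# Ignore the first half second so the filter's start-up transient has decayed.
steady_state_peak = max(abs(v) for v in filtered[500:])
print(f"tremor amplitude after filtering: {steady_state_peak:.2f}")
```

The trade-off in any such filter is lag: heavier smoothing removes more tremor but also delays the response to intentional movement.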

The consumer market is also seeing the impact. The vacuum robots of the past have evolved into home assistants capable of picking up clutter, loading dishwashers, and even performing light maintenance.

This leap in domestic utility is made possible because the physical AI can identify thousands of different household objects and understand how to handle them without breaking them.

Despite the rapid progress, the deployment of physical AI comes with a unique set of challenges that do not exist in the purely digital realm, chiefly safety, latency, and the hard constraints of the physical world.

As we look toward 2027 and beyond, the distinction between “online” and “offline” will continue to blur. We are moving toward a future where intelligence is embodied in the world around us. Physical AI is the final step in the journey of artificial intelligence, turning it from a tool we talk to into a partner that works alongside us.

The organizations that will lead the next decade are those that understand how to bridge the gap between their digital data and their physical operations. By giving AI a body, we are not just making machines more capable; we are fundamentally changing the way we interact with the world itself.

Physical AI is the integration of artificial intelligence with physical systems, such as robots or autonomous vehicles, allowing the AI to perceive, reason about, and interact with the three-dimensional world.

Traditional robotics often relies on pre-programmed, rigid instructions for specific tasks. Physical AI uses machine learning and world models to allow the robot to adapt to new, unpredictable situations and learn through experience.

World models are internal simulations used by the AI to predict the physical consequences of its actions. This allows the system to understand things like gravity, momentum, and friction, helping it navigate the world safely and efficiently.

The most common applications include autonomous logistics and delivery, advanced manufacturing, humanoid service robots, and precision surgical assistants in healthcare.

Safety is a primary focus of development. Modern systems use a combination of vision-based “spatial awareness” and mechanical “force-limiting” technology to ensure they can stop or move away if a human enters their immediate path.
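A minimal sketch of how the two safeguards might combine is shown below: a distance estimate from the vision system and a contact-force reading from the joints feed a single decision. The function name and every threshold are hypothetical values chosen for illustration; they are not drawn from any safety standard.

```python
def safety_action(
    human_distance_m,
    contact_force_n,
    stop_distance_m=0.5,   # hypothetical: halt if a person is closer than this
    slow_distance_m=1.5,   # hypothetical: reduce speed inside this radius
    force_limit_n=50.0,    # hypothetical: halt if contact force exceeds this
):
    """Return 'stop', 'slow', or 'continue' from spatial and force readings."""
    if contact_force_n > force_limit_n or human_distance_m < stop_distance_m:
        return "stop"
    if human_distance_m < slow_distance_m:
        return "slow"
    return "continue"


print(safety_action(human_distance_m=3.0, contact_force_n=0.0))   # continue
print(safety_action(human_distance_m=1.0, contact_force_n=0.0))   # slow
print(safety_action(human_distance_m=0.3, contact_force_n=0.0))   # stop
print(safety_action(human_distance_m=3.0, contact_force_n=80.0))  # stop
```

Checking force independently of distance means the robot still stops on unexpected contact even if the vision system misses the person.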

The next few years will define how we govern and integrate these physical agents into our daily lives. As physical AI continues to mature, it will redefine the limits of human-machine collaboration.

At [x]cube LABS, we craft intelligent AI agents that seamlessly integrate with your systems, enhancing efficiency and innovation.

Integrate our Agentic AI solutions to automate tasks, derive actionable insights, and deliver superior customer experiences effortlessly within your existing workflows.

For more information and to schedule a FREE demo, check out all our ready-to-deploy agents here.