1-800-805-5783

The conversation around artificial intelligence has shifted from basic automation to the sophisticated orchestration of autonomous agents.

We have seen these agents manage entire supply chains, conduct real-time fraud detection, and even assist in complex surgical procedures.

However, as the autonomy of these systems increases, so does the importance of a critical safety and governance framework: Human-in-the-Loop AI.

The goal of modern enterprise AI is not to remove the human from the equation but to redefine where that human provides the most value.

While an agentic system can process millions of data points in milliseconds, it often lacks the nuanced judgment, ethical grounding, and empathy required for high-stakes decisions.

Understanding when an agent should pause and seek human intervention is the defining challenge of the “Next Now” in business automation.

Human-in-the-Loop AI is a model that combines the computational power of machines with the seasoned intuition of human experts.

In an agentic workflow, this is not just a passive “approval” step at the end of a process. Instead, it is a dynamic interaction where the AI recognizes its own limitations and proactively requests assistance.

This framework is essential for maintaining “Meaningful Human Control” over autonomous systems.

By 2026, the industry has recognized that total “lights-out” automation in complex sectors like finance, healthcare, or law is not only risky but often non-compliant with emerging global regulations.

Human-in-the-Loop AI acts as the bridge that allows for high-velocity automation without sacrificing the safety net of human accountability.

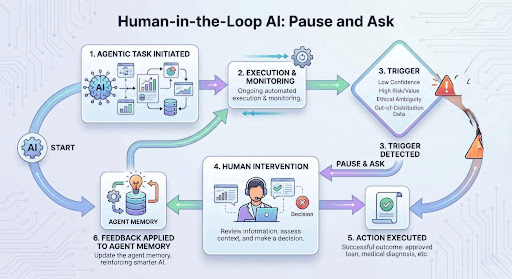

In a multi-agent ecosystem, “knowing what you don’t know” is a sign of a high-functioning system. Sophisticated agents are now programmed with specific “intervention triggers” that dictate when they should stop executing and wait for a human response.

The most basic trigger is a confidence score. If a diagnostic agent in a hospital identifies a rare pathology but the statistical confidence falls below a pre-set threshold, it must trigger Human-in-the-Loop AI. The agent presents its findings, the supporting evidence, and a clear request for verification. This ensures that the human expert spends their time on the most ambiguous cases rather than reviewing every routine scan.
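The confidence trigger described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `Finding` class, the `route_finding` function, and the 0.85 threshold are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass, field

# Hypothetical threshold; a real value would be set by clinical governance.
CONFIDENCE_THRESHOLD = 0.85


@dataclass
class Finding:
    label: str
    confidence: float  # model's estimated probability, 0.0 to 1.0
    evidence: list[str] = field(default_factory=list)


def route_finding(finding: Finding, threshold: float = CONFIDENCE_THRESHOLD) -> dict:
    """Decide whether the agent proceeds on its own or pauses for a human."""
    if finding.confidence >= threshold:
        return {"action": "auto_proceed", "label": finding.label}
    # Below the threshold: pause and hand over the finding, the supporting
    # evidence, and an explicit verification request.
    return {
        "action": "escalate_to_human",
        "label": finding.label,
        "confidence": finding.confidence,
        "evidence": finding.evidence,
        "request": f"Please verify suspected '{finding.label}'.",
    }
```

Routine, high-confidence scans pass straight through, so the reviewer only sees the cases where the model itself signals doubt.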

AI agents operate on logic and data, but business and medicine often operate on ethics and context. If an AI agent at an insurer is processing a claim that is technically valid but involves a highly sensitive or tragic customer situation, the agent should pause. Human-in-the-Loop AI allows a human representative to step in and handle the communication with the empathy and discretion that a machine cannot yet replicate.

In the world of finance, many institutions set “financial guardrails” for their agents. While an agent might have the authority to execute trades or approve loans up to a certain dollar amount, any transaction exceeding that limit requires a human sign-off. This is not necessarily because the agent is wrong, but because the institutional risk is too high to be managed solely by a machine.
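A financial guardrail of this kind is essentially a limit check. The sketch below assumes hypothetical action names and dollar limits (`APPROVAL_LIMITS`) purely for illustration; real limits would come from an institution's own risk policy.

```python
from decimal import Decimal

# Hypothetical per-action limits in dollars; not taken from any real policy.
APPROVAL_LIMITS = {
    "trade": Decimal("50000"),
    "loan": Decimal("25000"),
}


def requires_human_sign_off(action: str, amount: Decimal) -> bool:
    """Return True when the transaction must wait for a human approver."""
    limit = APPROVAL_LIMITS.get(action)
    if limit is None:
        # Unknown action types escalate by default rather than run unchecked.
        return True
    # Only amounts strictly above the limit need sign-off.
    return amount > limit
```

Using `Decimal` instead of floats avoids rounding surprises right at the limit, and escalating unknown action types keeps the default on the safe side.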

AI models are trained on historical data. When an agent encounters a “Black Swan” event—a scenario it has never seen before in its training set—its reasoning can become unpredictable. A robust Human-in-the-Loop AI architecture detects these “out-of-distribution” events and alerts a human specialist who can navigate the unprecedented situation using creative problem-solving.
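One very simple way to flag an “out-of-distribution” input is to compare it against summary statistics of the training data. The sketch below uses a single-feature z-score check; this is a deliberate simplification chosen for illustration, and the function names and the cutoff of 3.0 are assumptions. Production systems typically look at many features at once.

```python
from statistics import mean, stdev


def fit_reference(training_values: list[float]) -> tuple[float, float]:
    """Summarize the training data as (mean, sample standard deviation)."""
    return mean(training_values), stdev(training_values)


def is_out_of_distribution(value: float,
                           reference: tuple[float, float],
                           max_z: float = 3.0) -> bool:
    """Flag values that sit far outside what the model saw in training."""
    mu, sigma = reference
    if sigma == 0:
        # Training data had no spread, so any different value is unfamiliar.
        return value != mu
    return abs(value - mu) / sigma > max_z
```

When the check returns `True`, the agent would stop and alert a specialist instead of reasoning about a situation it has never seen.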

In 2026, the interaction between human and machine is managed by specialized “Orchestration Agents.” These agents act as the interface between the autonomous workforce and the human managers.

When an agent pauses, it does not just send an alert. It provides a comprehensive “Context Memo.” This is a product of Explainable AI (XAI) and Human-in-the-Loop AI working together. The memo summarizes what the agent was trying to do, why it paused, and what specific decision it needs from the human. This reduces the “cognitive load” on the human expert, allowing them to provide the necessary guidance in seconds.
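As a rough sketch, a Context Memo can be modeled as a small structured record with the three elements named above: what the agent was doing, why it paused, and what it needs. The `ContextMemo` class and its field names are hypothetical, not a standard format.

```python
from dataclasses import dataclass, field


@dataclass
class ContextMemo:
    goal: str             # what the agent was trying to do
    pause_reason: str     # why it paused
    decision_needed: str  # the specific decision it needs from the human
    options: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Format the memo as short plain text for the human reviewer."""
        lines = [
            f"Goal: {self.goal}",
            f"Paused because: {self.pause_reason}",
            f"Decision needed: {self.decision_needed}",
        ]
        if self.options:
            lines.append("Options: " + " | ".join(self.options))
        return "\n".join(lines)
```

Keeping the memo to a handful of fixed fields is what lets the reviewer respond quickly: the question is always in the same place.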

The human’s response is not just a binary “Yes” or “No.” It serves as a new data point. Through reinforcement learning from human feedback (RLHF), the agent learns from the human’s intervention.

Over time, the agent’s confidence in similar scenarios increases, allowing the system to become more autonomous while still operating under the strict guidance of the Human-in-the-Loop AI framework.

In banking, agentic systems manage everything from credit scoring to fraud detection. However, if a fraud agent starts flagging an unusually high number of legitimate transactions, it signals “model drift.”

Human-in-the-Loop AI allows a risk officer to pause the agent, investigate the cause of the false positives, and re-calibrate the agent’s logic before it impacts thousands of customers.
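One way to surface this kind of drift is to track how many of the agent's recent fraud flags turn out to be legitimate once reviewed. The monitor below is a sketch under stated assumptions: the class name, the window size, and the thresholds are all hypothetical, and the reviewed outcomes are assumed to arrive from a human or downstream check.

```python
from collections import deque


class FalseAlarmMonitor:
    """Pause a fraud agent when too many recent flags prove legitimate."""

    def __init__(self, window: int = 500,
                 max_false_alarm_share: float = 0.05,
                 min_samples: int = 50):
        self.outcomes = deque(maxlen=window)  # True = flag was a false alarm
        self.max_false_alarm_share = max_false_alarm_share
        self.min_samples = min_samples
        self.paused = False

    def false_alarm_share(self) -> float:
        """Share of recent flagged transactions that were legitimate."""
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def record(self, was_actually_fraud: bool) -> None:
        """Record the reviewed outcome of one flagged transaction."""
        self.outcomes.append(not was_actually_fraud)
        enough_data = len(self.outcomes) >= self.min_samples
        if enough_data and self.false_alarm_share() > self.max_false_alarm_share:
            self.paused = True  # stays paused until a human resumes it

    def resume(self) -> None:
        """Called by the risk officer after investigating and recalibrating."""
        self.outcomes.clear()
        self.paused = False
```

The pause is deliberately sticky: the agent does not restart on its own, which keeps the risk officer in control of when it goes back into service.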

In clinical settings, the AI serves as a co-pilot. During a complex robotic surgery, a physical AI agent might handle the routine suturing, but if it detects an unexpected anatomical variation, it instantly hands over full control to the surgeon. This synergy ensures that the speed of the machine is always guided by the life-saving experience of the human.

In e-commerce, product discovery agents can handle 90% of customer requests. But if a customer has a highly specific, complex query about a product’s sustainability or origin that the agent cannot verify with 100% certainty, the system seamlessly transitions the chat to a human brand expert. This prevents the “hallucinations” that can damage brand trust.

A common concern for enterprise leaders is that Human-in-the-Loop AI will slow down their operations. However, the data from 2026 suggests that the “hybrid model” is actually more efficient in the long run.

By automating the “boring” and high-volume tasks while reserving humans for the high-value “exceptions,” organizations can scale their output without increasing their risk profile. The cost of a human “pause” is negligible compared to the astronomical cost of an autonomous error that results in a regulatory fine, a medical malpractice suit, or a massive financial loss.

| Automation Level | Strategy | Role of Human-in-the-Loop AI |
| --- | --- | --- |
| Fully Autonomous | High-volume, low-risk | Periodic auditing only |
| Agentic Assistance | Semi-complex workflows | Real-time monitoring and verification |
| Human-Led AI | High-stakes / Ethical decisions | Constant oversight and final approval |

By 2026, global frameworks like the EU AI Act and US executive orders have made Human-in-the-Loop AI a legal requirement for “High-Risk AI Systems.” These laws mandate that for certain sectors, there must be a “kill switch” and a documented path for human intervention.

Enterprises are now adopting “Human-Centric AI Charters,” which define the specific conditions under which an agent must pause. These charters are not just technical documents; they are ethical promises to customers and regulators that the brand will never allow a machine to make a life-altering decision without a human safety net in place.

The evolution of agentic AI is not leading us toward a world without humans; it is leading us toward a world of super-powered humans.

Human-in-the-Loop AI is the framework that makes this possible. It allows us to harness the incredible speed and scale of autonomous agents while ensuring that our systems remain grounded in human values, ethics, and common sense.

As we look toward 2027, the goal for every forward-thinking organization should be to build agents that are smart enough to do the work but wise enough to know when to ask for help. In that partnership, we find the true promise of artificial intelligence.

The main benefit is the reduction of risk. By ensuring that a human expert is available to handle complex, high-stakes, or ambiguous situations, organizations can prevent the errors and biases that sometimes occur in fully autonomous systems.

For 90% of tasks, the AI handles them autonomously, with no slowdown. For the remaining 10% that require a human, there is a slight delay, but this is a necessary trade-off for the safety and accuracy of the final decision.

Agents are programmed with “intervention triggers,” which include low confidence scores, high-risk financial thresholds, or the detection of “out-of-distribution” data that the agent hasn’t encountered in its training.

In many jurisdictions and for “high-risk” industries like healthcare and finance, regulations are increasingly mandating a degree of human oversight and a “right to explanation” for all AI-driven decisions.

Implementation starts with defining your “risk appetite” and your “escalation logic.” You need to identify which decisions are safe for total automation and which require the unique judgment of your human staff.
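In practice, escalation logic often begins as a simple lookup from decision type to oversight level. The sketch below reuses the three oversight roles from the automation-level table earlier in this article; the decision types in `ESCALATION_POLICY` are hypothetical examples, and each organization would fill in its own based on its risk appetite.

```python
from enum import Enum


class Oversight(Enum):
    PERIODIC_AUDIT = "periodic auditing only"
    REALTIME_VERIFICATION = "real-time monitoring and verification"
    FINAL_APPROVAL = "constant oversight and final approval"


# Hypothetical decision types, used only to illustrate the mapping.
ESCALATION_POLICY = {
    "invoice_matching": Oversight.PERIODIC_AUDIT,
    "fraud_flag_review": Oversight.REALTIME_VERIFICATION,
    "treatment_recommendation": Oversight.FINAL_APPROVAL,
}


def oversight_for(decision_type: str) -> Oversight:
    """Look up the oversight level; unlisted decisions get the strictest one."""
    return ESCALATION_POLICY.get(decision_type, Oversight.FINAL_APPROVAL)
```

Defaulting unlisted decisions to final human approval means a new workflow has to be explicitly classified before it can run with less oversight.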

We help enterprises become AI-native, not by adding AI on top of existing systems, but by rebuilding the intelligence layer from the ground up. With 950+ products shipped and $5B+ in value created for clients across 15+ industries, here is what we bring to the table:

We design and deploy agentic AI systems that sense, decide, and act without human bottlenecks, handling complex, multi-step workflows end-to-end with measurable resolution rates and no manual intervention.

Our voice platform Ello puts production-ready voice agents in front of your customers in minutes. Zero-latency conversations across 30+ languages, with no call centers and no wait times.

We replace manual, error-prone workflows with intelligent automation across invoicing, compliance, customer service, and operations, freeing your teams to focus on work that requires human judgment.

Using machine learning and real-time data pipelines, we build systems that forecast demand, flag risk, optimize inventory, and surface strategic insights before your teams need to ask for them.

We design and build IoT platforms that turn physical devices into intelligent, connected systems with built-in real-time monitoring, remote management, and condition-based automation.

From data lakes and ETL pipelines to AI-ready cloud architecture, we build the foundation that makes everything else possible: scalable, reliable, and designed to grow with your business.

If you are looking to move from AI experimentation to AI-native operations, let’s talk.